What AI-Native Veterinary Software Should Mean, and Why the Label Is No Longer Enough

By mid-2026, nearly every serious veterinary software vendor calls itself AI-native. Digitail says it on the homepage. Lupa raised $25M on the label. PawthosX claims to be the first AI-native operating system built for veterinary practices. NectarVet and Vetspire tell the same story.

So the label has stopped doing any real work. What matters now is what the AI actually does when a clinician is mid-consult, writing a SOAP note, or trying to remember a drug interaction for a breed they haven't treated in six months.

That distinction, between documentation AI and clinical AI, is what the phrase 'AI-native' should always have pointed to. And it's the distinction Bittsi is built around.

The Problem With 'AI-Native' in 2026

When the phrase first circulated, it meant something. It distinguished software built with AI at the core from legacy systems that bolted a feature on. That was a real and useful distinction.

It no longer is. The term has been adopted so widely that it describes almost nothing specific. A tool that generates notes after you click a button can call itself AI-native. So can a chatbot that answers isolated questions, or a reporting dashboard that surfaces historical data. All of them, technically, have AI inside.

The more useful question has shifted: what does the AI actually do, and when does it do it? That shift is already shaping how teams think about AI in veterinary practice management, where the focus is moving from features to real clinical impact.

Two Very Different Kinds of AI in Veterinary Practice

Most AI tools in the veterinary space fall into one of two categories. Understanding the difference is how clinics avoid buying a documentation upgrade when they need a clinical partner.

Documentation AI: faster notes, same workflow

This is the category that most 'AI-native' claims actually describe. The tool listens to a consultation and produces a SOAP note. It's genuinely useful: it removes a real burden from veterinarians who currently spend hours after the clinic closes catching up on records. This is where an AI scribe for veterinary clinics becomes valuable, reducing documentation time without changing the structure of the workflow itself.

But it doesn't change what happens during the consultation. It doesn't surface a drug interaction. It doesn't flag that the dose is outside normal range for the patient's weight. It doesn't know that this breed has elevated risk for the condition being discussed. It processes language and produces structured text, quickly, reliably, and at scale.

HappyDoc, Scribenote, VetRec, and ScribbleVet are the leading examples. They are purpose-built for documentation. They are good at it. And they are not a clinical AI strategy.

Clinical AI: intelligence that participates in care

Clinical AI operates differently. It doesn't just transcribe what was said; it interprets what it means and contributes what the clinician needs to know before the decision is made.

This includes:

• Medication safety checks: flagging interactions, out-of-range doses, and contraindications in real time

• Toxicology protocols: surfacing exposure guidelines and treatment paths during the consultation, not after

• Breed-specific risk alerts: surfacing known genetic or anatomical risk factors relevant to the case

• Lab pattern analysis: identifying patterns across a patient's history that may not be visible in a single result

This is what Sage, Bittsi's embedded AI agent, does. And no competitor currently leads with any of it.
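To make the dosing-range alert concrete, here is a minimal sketch in Python. It is an illustration only, not Sage's implementation: the `DoseRange` type, the `FORMULARY` table, the `check_dose` function, the drug name `example_drug`, and the mg/kg values are all hypothetical placeholders, not clinical data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DoseRange:
    """Hypothetical per-kilogram dosing window in mg/kg. Not real clinical data."""
    min_mg_per_kg: float
    max_mg_per_kg: float


# Placeholder formulary keyed by (drug, species). Values are invented for illustration.
FORMULARY = {
    ("example_drug", "dog"): DoseRange(min_mg_per_kg=1.0, max_mg_per_kg=2.0),
}


def check_dose(drug: str, species: str, weight_kg: float, dose_mg: float) -> Optional[str]:
    """Return an alert message if the dose is outside the stored range, else None."""
    if weight_kg <= 0:
        raise ValueError("weight_kg must be positive")

    dose_range = FORMULARY.get((drug, species))
    if dose_range is None:
        # An unknown drug/species pair is surfaced rather than silently passed.
        return f"No dosing range on file for {drug} in {species}; cannot verify."

    per_kg = dose_mg / weight_kg
    if per_kg < dose_range.min_mg_per_kg:
        return f"{drug}: {per_kg:.2f} mg/kg is below the expected range."
    if per_kg > dose_range.max_mg_per_kg:
        return f"{drug}: {per_kg:.2f} mg/kg is above the expected range."
    return None


if __name__ == "__main__":
    # A 10 kg patient prescribed 30 mg works out to 3.00 mg/kg, above the placeholder range.
    print(check_dose("example_drug", "dog", weight_kg=10.0, dose_mg=30.0))
```

The point of the sketch is timing rather than sophistication: a check like this only matters if it runs while the prescription is being entered, which is what separates a clinical alert from a note generated afterward.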

What This Means for How 'AI-Native' Should Be Defined

If AI-native is going to mean anything defensible in 2026, it has to mean more than 'we have AI inside.' The definition has to be grounded in clinical function: what the AI knows, what it can catch, and when it shows up.

A system is genuinely AI-native when:

• It understands clinical context, not just clinical language

• It participates in decisions, not just in documentation

• It reduces the probability of a clinician missing something important, not just the time spent writing notes

By that definition, most tools currently claiming the label don't qualify. They are documentation tools with an AI layer: genuinely useful, but categorically different from a system designed to think alongside the clinician.

Why the Scribe Category Is Not Bittsi's Competitor

It's tempting to frame AI scribes as competitors because they operate in the same space and use similar language. But the strategic reality is different.

Scribes are attachments. They work with a practice information management system (PIMS); they don't replace one. HappyDoc integrates with Avimark, Cornerstone, ezyVet, and ImproMed. Scribenote's free tier pulls adoption across any system. VetRec connects via API. Their business model depends on running alongside a PIMS, not replacing it.

Bittsi positions itself as the PIMS that makes a standalone scribe unnecessary, because documentation happens natively and the AI goes further than documentation can.

Where AI-Native Systems Show Up in Daily Clinic Work

The impact isn't in a single feature; it's in moments that repeat across the day.

During consultations

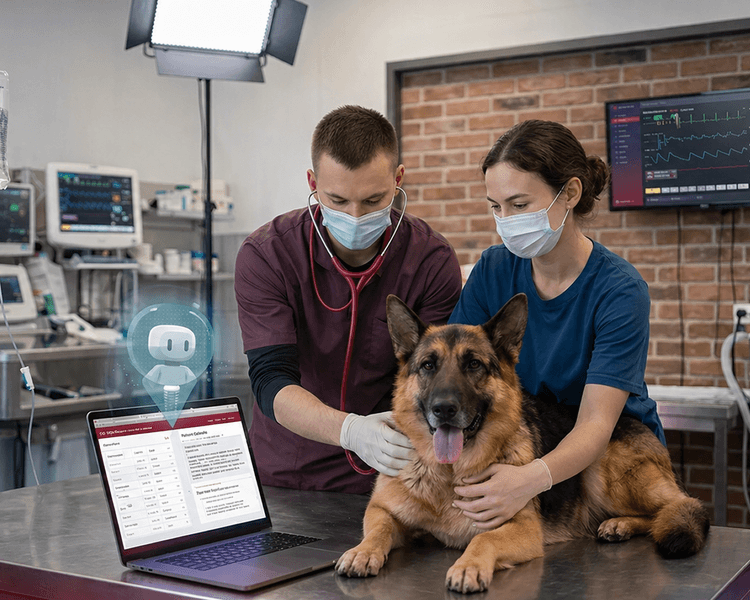

With real-time AI SOAP note generation, notes take shape as the conversation happens, removing the documentation backlog that builds through a busy schedule. Beyond documentation, Sage works as an embedded AI agent for veterinary workflows, surfacing relevant clinical information, including drug safety, breed risk, and protocol guidance, without requiring the clinician to stop and search.

Between appointments

Patient history, previous visit summaries, and lab patterns are available without manual searching. The system understands context across visits, not just within a single session.

In high-pressure environments

In emergency veterinary clinics, where AI is becoming increasingly critical, priorities shift rapidly and clinical decisions carry higher stakes. That makes the difference between a documentation tool and a clinical AI immediately visible.

Is Clinical AI Right for Every Practice?

Not immediately. Smaller practices with stable workflows and low patient volume may not feel the pressure that makes clinical AI valuable. In those environments, documentation relief alone may be the dominant need.

But in practices where:

• Appointments run tightly back-to-back

• Multiple veterinarians are working simultaneously

• Complex cases require fast cross-referencing of drug interactions or protocols

• Staff turnover creates knowledge gaps that the system needs to cover

the distinction between a documentation AI and a clinical AI becomes concrete. One speeds up paperwork. The other changes the quality of care that's possible under time pressure.

The Definition That Actually Matters

'AI-native' stopped being a differentiator the moment every vendor adopted it. What matters now is the substance behind the label, and specifically, what the AI can do when a patient is on the table and a decision needs to be made.

Documentation is a solved problem. The speed gains from AI scribes are real and the market has priced them in. The next question is whether the AI knows what the veterinarian knows, or can surface what they don't.

That's the gap Sage is built to close, and the gap that will define which platforms actually matter over the next few years.